The air in a modern data center doesn't feel like progress. It feels like a refrigerator. Walking through the aisles of a high-security server farm, you are surrounded by a relentless, industrial hum—the sound of billions of private thoughts, corporate secrets, and proprietary algorithms being processed at the speed of light. This is where the physical world meets the digital one. And right now, this cold, sterile environment is the front line of a heated legal battle that could redefine who owns your digital life.

Microsoft, a titan that once defined the closed-source era, has found itself in an unlikely position: standing as a shield for a competitor. The conflict centers on Anthropic, an AI safety and research company that represents the new guard of artificial intelligence. But this isn't a simple story of corporate rivalry. It is a story about the reach of government power, the sanctity of the cloud, and the increasingly blurry line between national security and overreach.

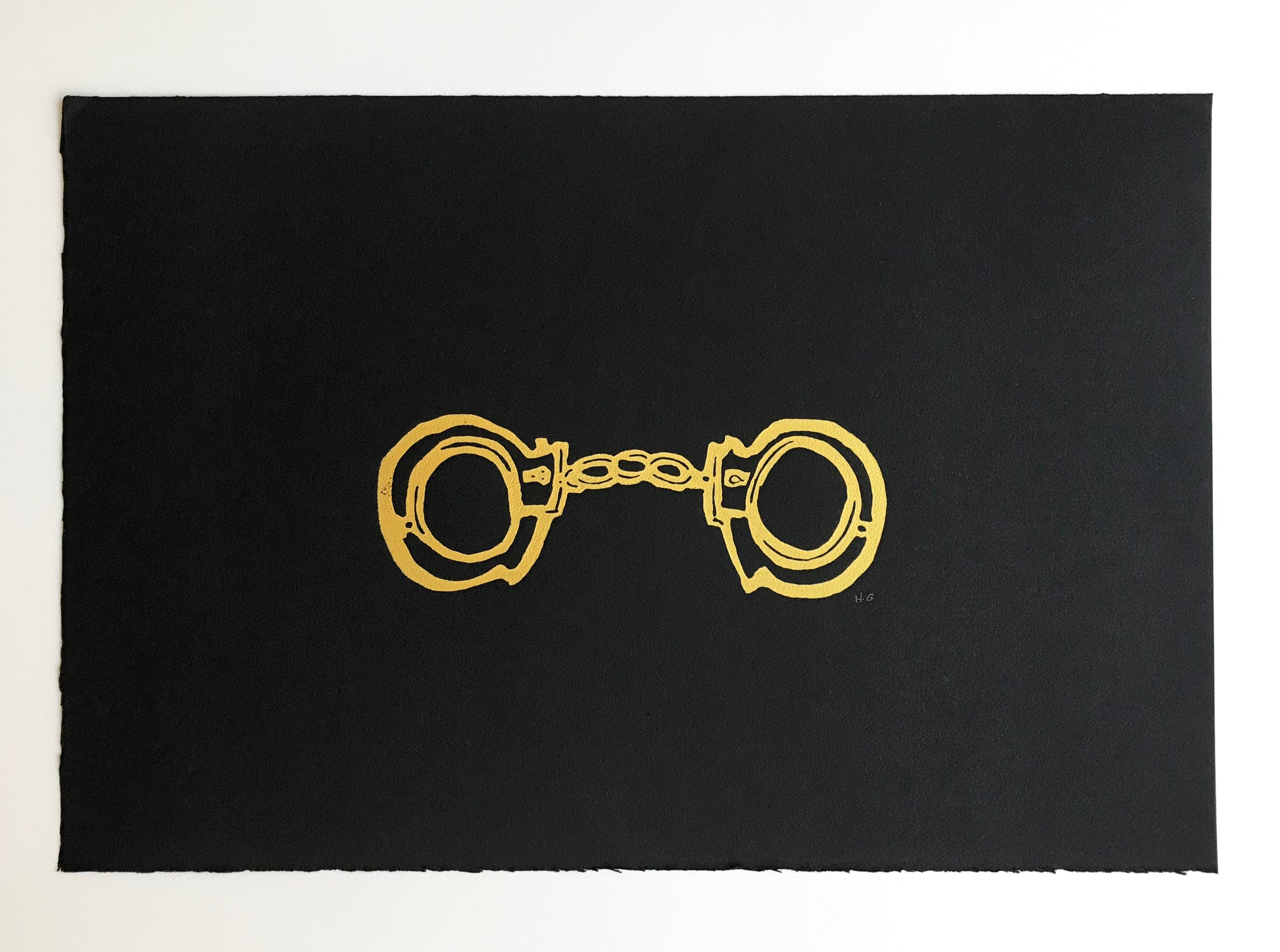

When the Trump administration moved to compel access to internal data and structural secrets within the AI industry, it framed the move as a matter of protecting the American lead in the global tech race. On paper, it sounds logical. In practice, it looks like a skeleton key.

The Architect and the Algorithm

Consider a hypothetical engineer named Sarah. Sarah has spent three years developing a "constitutional" framework for an AI model: a set of internal rules that prevent the machine from generating instructions for bioweapons or spreading hate speech. This framework isn't just code. It is the intellectual soul of her company. It is what makes its product safe, marketable, and unique.

One morning, Sarah arrives at work to find a government mandate on her desk. The order doesn't just ask for a list of users. It demands a look under the hood. It wants the weights, the biases, and the training methodology. The administration argues that because AI is a "dual-use" technology—meaning it can be used for both civilian and military purposes—the state has a right to oversee its most intimate mechanics.

Microsoft stepped into this fray not because it is particularly fond of Anthropic's market share, but because it recognizes a dangerous precedent. If the government can force its way into Anthropic's servers today, Microsoft's own Azure cloud, which houses the data of hospitals, banks, and millions of private citizens, is no longer a vault. It becomes a glass box.

The stakes are invisible until they aren't. We often treat "the cloud" as a metaphorical ether, but it is a physical place subject to the laws of the land. When those laws begin to treat software development like the enrichment of uranium, the culture of innovation withers.

The Ghost in the Regulatory Machine

The administration’s push is rooted in a philosophy of "Technological Protectionism." The logic follows a specific path: AI is the new electricity. Whoever controls the most powerful AI controls the global economy. Therefore, the government must ensure that this "electricity" doesn't leak to adversaries or evolve in ways the state cannot monitor.

But software isn't like plutonium. You can't track it with a Geiger counter. It lives in the minds of developers and the architecture of neural networks.

By demanding deep access to Anthropic’s proprietary systems, the administration is essentially asking for a seat at the developer’s table. This creates a friction that few outside the industry truly feel. Imagine trying to write a novel while a government censor sits behind you, reading every half-finished sentence and demanding to know the "national security implications" of your protagonist's choices. You wouldn't write a better book. You would likely stop writing altogether.

Microsoft's intervention highlights a fundamental truth about the digital age: trust is the only currency that actually matters. If a company cannot guarantee that its customers' data is shielded from arbitrary government seizure, that company loses its ability to compete in a global market. Why would a European startup or a Japanese bank use an American cloud service if they know the U.S. government has a back door?

A History of Broken Vaults

This isn't the first time we've stood at this crossroads. In the 1990s, during the "Crypto Wars," the government pushed the "Clipper Chip," an encryption chip with built-in key escrow that would have given law enforcement a back door into encrypted phone communications. The argument then was the same as it is now: we need this to catch the bad guys.

The tech community fought back and won, arguing that a back door for the "good guys" is eventually a front door for the "bad guys." There is no such thing as a secure hole in a wall.

Today, the battle has moved from encryption to the models themselves. The administration’s interest in Anthropic is a modern echo of that old desire for control. They are looking for a way to regulate the "thought process" of the machine.

Microsoft’s legal team is betting that the courts will see this as an overstep of the Executive Branch’s authority. They are leaning on the Fourth Amendment, which protects against unreasonable searches and seizures. In the digital realm, a "search" isn't just a police officer tossing a room; it’s a government algorithm scanning a private database for patterns.

The Human Cost of Cold Code

We tend to talk about these fights in terms of "compliance," "mandates," and "jurisdiction." Those words are designed to be boring. They are meant to make you look away.

But behind those words are people. There are the researchers who worry that their life's work will be "nationalized" by a stroke of a pen. There are the small business owners who wonder if their private data, stored on these same servers, will be swept up in a broad "security" dragnet. And there are the citizens who realize that once the government establishes the right to inspect the "brain" of an AI, they have effectively established the right to monitor any digital process they deem "critical."

The pushback from Redmond is a rare moment of corporate alignment with civil liberties. It’s an admission that even the largest companies in the world are vulnerable to the whims of an administration that views technology as a weapon first and a tool second.

The real danger isn't that the government will find a "red button" inside Anthropic's code. The danger is the chilling effect. Innovation requires a certain amount of darkness: a private space where engineers can fail, experiment, and build without the weight of state surveillance. Turn the lights up too bright, and everyone stops moving.

The Invisible Border

The digital world was supposed to be borderless. For decades, we operated under the assumption that a server in Virginia was part of a global, open network. That illusion is shattering. We are seeing the rise of "digital borders," where the data inside a machine is treated as a natural resource to be guarded and extracted by the state.

If the administration wins this fight against Anthropic, the result won't be a safer America. It will be a more isolated one.

Talent will move. Capital will shift. The most brilliant minds in AI will look for jurisdictions where their work belongs to them, not the Department of Commerce. We are witnessing a slow-motion divorce between the people who build the future and the people who govern the present.

The hum of the data center continues. It doesn't care about court filings or executive orders. It just processes. But the people who maintain those servers, the people who write the code, and the people whose lives are increasingly managed by these systems are all waiting to see if the vault will hold.

The light under the server room door remains steady, a thin line of white against the dark hallway of a Silicon Valley headquarters. Somewhere inside, an algorithm is learning. The question remains: who gets to decide what it's allowed to know, and, more importantly, who is allowed to watch it learn?

The steady hum of the machine is deceptive. Within it, the future of privacy is being dismantled, one byte at a time, under the guise of protection.
