Deep beneath the glass and steel of Menlo Park, a silent hum is changing pitch. It isn't the sound of fans cooling rows of standard-issue processors bought from the global giants. It is the sound of a gamble.

For years, the digital empires we inhabit—Facebook, Instagram, WhatsApp—ran on borrowed brains. Every time you scrolled past a targeted ad or marveled at a translated post, a chip designed by someone else did the heavy lifting. Usually, that "someone" was Nvidia. But the dependency became a chokehold. When the world went mad for generative artificial intelligence, the line for those chips stretched around the metaphorical block. Prices skyrocketed. Supply chains groaned.

Meta, a company built on the premise of connecting billions, found itself disconnected from its own destiny. They were renters in a landlord’s market.

So, they decided to stop renting. They decided to build the forge itself.

The Architecture of an Obsession

Imagine a marathon runner who realizes they can no longer win because their shoes are made for hikers. The shoes are high-quality, certainly, but they aren't optimized for the specific stride, the unique cadence, or the particular terrain of the race being run. To win, the runner must become a cobbler.

Meta’s new "in-house" chip, the Meta Training and Inference Accelerator (MTIA), is that custom-made shoe.

Most people think of AI as a singular, mystical entity. It isn’t. It is a grueling series of mathematical chores. When you open your feed, ranking models have to make a staggering number of micro-decisions in milliseconds. They ask: Does this person want to see a video of a golden retriever or a recipe for sourdough? Will they click this ad for hiking boots? Is this comment a death threat or a sarcastic joke?

Standard chips are jacks-of-all-trades. They are designed to handle everything from high-end gaming graphics to scientific simulations. Meta’s chips are specialists. They are stripped of the "fluff" required for a teenage gamer in Seoul and honed specifically for the ranking and recommendation algorithms that power your digital life.

By custom-tuning the silicon to the software, Meta isn't just seeking speed. They are seeking efficiency. In the world of data centers, heat is the enemy. Power is the cost. A chip built to do one thing well can use less "juice" and generate less heat on that one job than a general-purpose beast.

The Invisible Arms Race

The timing of this rollout feels like a sudden pivot, but it is actually the climax of a long, quiet war. Only weeks ago, Mark Zuckerberg was touting massive deals to acquire hundreds of thousands of Nvidia’s H100 GPUs. To the casual observer, it looked like a total surrender to the status quo.

In reality, it was a bridge.

You don't build a semiconductor empire overnight. While the world watched the billionaire shopping spree, a separate, smaller team of engineers was obsessing over "inference." Inference is the "live" part of AI. If "training" is teaching a child to read, "inference" is the child actually reading a book in real time.
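For readers who want to see that split rather than take it on faith, here is a minimal sketch in Python. It is a toy with a single learned number, not anything resembling Meta's systems; the function names `train` and `infer` and the sample data are hypothetical, chosen only for illustration.

```python
# Toy illustration only: a one-weight model, nothing like a production system.

def train(examples, epochs=200, lr=0.01):
    """Training: repeatedly nudge the weight to shrink the error on known examples."""
    w = 0.0
    for _ in range(epochs):
        for x, y in examples:
            error = w * x - y      # how far off the current guess is
            w -= lr * error * x    # gradient step for squared error
    return w

def infer(w, x):
    """Inference: apply the already-learned weight to a new input. Nothing is learned here."""
    return w * x

# Hypothetical data in which the answer is always twice the input.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w = train(examples)           # the slow, expensive part, done ahead of time
prediction = infer(w, 10.0)   # the fast part, repeated for every live request
print(round(w, 3), round(prediction, 2))
```

Training loops over the data hundreds of times; inference is a single multiplication. That lopsidedness, scaled up enormously, is why the two jobs can reasonably run on different kinds of hardware.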

Meta still needs Nvidia for the massive, bone-crushing work of training their Llama models. That requires raw, unadulterated power. But for the daily, hourly, second-by-second task of serving content to three billion people? That is where the MTIA steps in.

Consider the logistical nightmare of the old way. Every time Meta wanted to tweak how its AI suggested a "Reel," it had to hope the software would play nice with the generic hardware. Now, the hardware and software are siblings. They speak the same language. They share the same bloodline.

The Human Cost of the "Free" Internet

Why should any of this matter to someone who just wants to see photos of their grandkids?

Because the cost of running the internet is no longer measured just in code. It is measured in megawatts and silicon. As AI models grow more complex, the energy required to run them threatens to break the business models of the "free" web. If it costs Meta five cents in electricity every time you refresh your feed, the company dies.
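The five-cent figure is a thought experiment, not a reported cost, but the arithmetic behind it is worth running once. The sketch below uses the essay's round number of three billion users and an assumed ten refreshes per person per day; that second figure is purely hypothetical.

```python
# Back-of-the-envelope arithmetic. Every input is an illustrative assumption,
# not a reported figure.

USERS = 3_000_000_000             # the essay's round "three billion people"
REFRESHES_PER_USER_PER_DAY = 10   # hypothetical
COST_PER_REFRESH_CENTS = 5        # the "five cents" thought experiment

def daily_cost_usd(users, refreshes_per_user, cents_per_refresh):
    """Total electricity bill per day, in whole dollars."""
    total_cents = users * refreshes_per_user * cents_per_refresh
    return total_cents // 100

daily = daily_cost_usd(USERS, REFRESHES_PER_USER_PER_DAY, COST_PER_REFRESH_CENTS)
annual = daily * 365

print(f"Per day:  ${daily:,}")
print(f"Per year: ${annual:,}")
```

Under those assumptions the bill comes to $1.5 billion a day, or roughly $547.5 billion a year. Change the inputs however you like; the point is only that tiny per-request costs multiply into sums no advertising business could absorb.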

By building their own chips, they are lowering the "cost of curiosity."

But there is a darker, more human tension here. We are entering an era where a handful of corporations own the entire stack of human experience. They own the social platform (the skin), the algorithms (the brain), and now, the physical atoms of the processors (the skeleton).

When a company owns the chip, it owns the limits of what is possible. It can hard-code priorities into the silicon itself. This isn't just about business margins; it's about the fundamental infrastructure of human interaction. We are moving toward a world where the medium isn't just the message; the silicon is the gatekeeper.

The Ghost in the Silicon

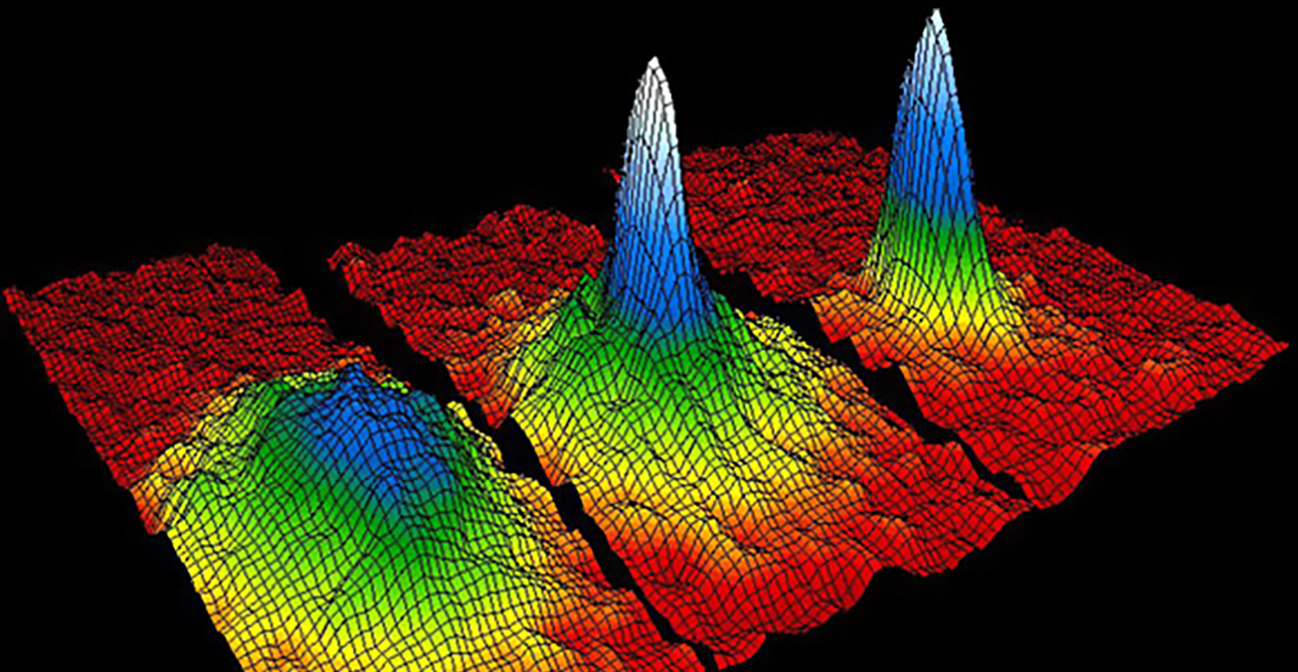

Think of a hypothetical engineer named Sarah. Sarah has spent three years staring at "floorplans"—the blueprints of where transistors sit on a piece of silicon the size of a fingernail. She knows that if she moves a specific memory block three microns to the left, she can shave 0.001 milliseconds off the time it takes for a user in rural India to see a translated post.

To Sarah, this isn't a "rollout." It's a masterpiece. It's the culmination of thousands of hours of simulation, of failed yields, and of late-night "war rooms" when the first prototypes came back from the foundry with errors.

When the news reports "Meta rolls out in-house chips," they are reporting on a balance sheet. They aren't reporting on the sheer, terrifying audacity of a software company learning to design for the physical world of lithography and extreme ultraviolet light, where every mistake comes back from the foundry etched in silicon.

Semiconductors are among the most difficult things humans have ever learned to make. They require a level of precision that makes brain surgery look like blunt-force trauma. Meta isn't just competing with Google or Amazon; they are competing with the laws of physics.

The Fragile Independence

There is a vulnerability in this strength. By moving away from the "industry standard," Meta is stepping out onto a tightrope without a net. If the MTIA chips fail, or if they fall behind the blistering pace of Nvidia’s innovation, Meta will have wasted billions on a bespoke paperweight.

They are betting that their specific needs are so unique that "off-the-shelf" will never be good enough again. It is a declaration of sovereignty.

The industry calls this "vertical integration." It sounds sterile. In truth, it is an act of survival. In a world where AI is the new oil, Meta has decided it can no longer afford to buy its fuel from a gas station owned by a competitor. They are drilling their own wells.

The End of the Beginning

The hum in the data centers is different now.

It is more focused. More intentional. More Meta.

We are no longer just users of a platform. We are the fuel for a machine that owns its own brain. The "rollout" isn't a press release; it's the quiet clicking of three billion lives being processed on silicon that was born from the same company that keeps us scrolling.

The invisible stakes are no longer just "who wins the AI race." It's whether the very tools of our communication are becoming so specialized that they can no longer be understood by anyone outside the walls of the palace.

We are the passengers on a ship where the captain has just built the engine from scratch. It is faster. It is more efficient. But it is also more mysterious than it has ever been.

The silence of the silicon is where the real story lives.
